|

SkePU

1.2

|

The main namespace for SkePU library. More...

Classes | |

| class | Generate |

| A class representing the Generate skeleton. More... | |

| class | Map |

| A class representing the Map skeleton. More... | |

| class | MapArray |

| A class representing the MapArray skeleton. More... | |

| class | MapOverlap |

| A class representing the MapOverlap skeleton. More... | |

| class | MapReduce |

| A class representing the MapReduce skeleton. More... | |

| class | Matrix |

| A matrix container class (2D matrix), internally uses 1D container (std::vector) to store elements in a contiguous memory allocations. More... | |

| class | Reduce |

| A class representing the Reduce skeleton both for 1D and 2D reduce operation for 1D Vector, 2D Dense Matrix/Sparse matrices. More... | |

| class | Reduce< ReduceFunc, ReduceFunc > |

| A specilalization of above class, used for 1D Reduce operation. Please note that the class name is same. The only difference is how you instantiate it either by passing 1 user function (i.e. 1D reduction) or 2 user function (i.e. 2D reduction). See code examples for more information. More... | |

| class | Scan |

| A class representing the Scan skeleton. More... | |

| class | SparseMatrix |

| A sparse matrix container class that mainly stores its data in CSR format. More... | |

| class | MapOverlap2D |

| A class representing the MapOverlap skeleton for 2D overlap for Matrix operands (useful for convolution and stencil computation). More... | |

| class | DataCollector2D |

| A class that can be used to collect 2D data. More... | |

| class | DeviceAllocations_CU |

| class | Device_CL |

| A class representing an OpenCL device. More... | |

| class | Device_CU |

| A class representing a CUDA device. More... | |

| class | DeviceMemPointer_CL |

| A class representing an OpenCL device memory allocation for container. More... | |

| struct | UpdateInf |

| class | DeviceMemPointer_CU |

| A class representing a CUDA device memory allocation for container. More... | |

| struct | openclGenProp |

| struct | openclDeviceProp |

| class | EnvironmentDestroyer |

| A class that is used to properly deallocate singelton object of Environment class. More... | |

| class | Environment |

| A class representing a execution environment. More... | |

| struct | BackEndParams |

| Can be used to specify properties for a backend. More... | |

| class | ExecPlan |

| A class that describes an execution plan. More... | |

| class | TimerLinux_GTOD |

| A class that can be used measure time on Linux systems. More... | |

| struct | ExtraData |

| Any extra information that User want to pass to the function wrapper for implementations can be specified here.... More... | |

| struct | Point |

| struct | Node |

| class | Trainer |

| end Node class... More... | |

| class | TuneData |

| A class that can be used to collect tuning data. More... | |

| class | DeviceMemPointer_Matrix_CL |

| A class representing an OpenCL device memory allocation for Matrix container. Not used much right now. More... | |

| class | DeviceMemPointer_Matrix_CU |

| A class representing a CUDA 2D device memory allocation for Matrix container. More... | |

| class | Threads |

| class | ThreadPool |

| ThreadPool class manages all the ThreadPool related activities. This includes keeping track of idle threads and synchronizations between all threads. More... | |

| struct | Tuner |

| Tuner class: generic definition.... Multiple class specializations are defined for this class, one for each skeleton type. It allows to avoid possible compiler errors considering differences in function arguments for different skeleton types. More... | |

| struct | Tuner< StructType, MAPREDUCE, StructType2 > |

| Tuner class specilization for MapReduce skeleton. More... | |

| struct | Tuner< StructType, MAP, StructType > |

| Tuner class specilization for Map skeleton. More... | |

| struct | Tuner< StructType, REDUCE, StructType > |

| Tuner class specilization for Reduce skeleton. More... | |

| struct | Tuner< StructType, MAPARRAY, StructType > |

| Tuner class specilization for MapArray skeleton. More... | |

| struct | Tuner< StructType, MAPOVERLAP, StructType > |

| Tuner class specilization for MapOverlap skeleton. More... | |

| class | Vector |

| A vector container class, implemented as a wrapper for std::vector. More... | |

Enumerations | |

| enum | OverlapPolicy |

| enum | AccessType |

| Can be used to specify whether the access is row-wise or column-wise. More... | |

| enum | ReducePolicy |

| Can be used to specify the direction of reduce for 2D containers. More... | |

| enum | ScanType |

| enum | SparseFileFormat |

| Can be used to specify the input format for a sparse matrix that is supplied in constructor. More... | |

| enum | BackEnd |

| Can be used to specify which backend to use. | |

Functions | |

| double | testHostToDeviceTransfer (unsigned int memSize, memoryMode memMode, bool wc) |

| test the bandwidth of a host to device memcopy of a specific size | |

| double | testDeviceToDeviceTransfer (unsigned int memSize) |

| test the bandwidth of a device to device memcopy of a specific size | |

| DevTimingStruct | measureOrLoadCUDABandwidth (int gpuId, bool pinnedMemory=false) |

| template<typename T > | |

| std::string | getDataTypeCL () |

| template<> | |

| std::string | getDataTypeCL< int > () |

| template<> | |

| std::string | getDataTypeCL< unsigned int > () |

| template<> | |

| std::string | getDataTypeCL< long > () |

| template<> | |

| std::string | getDataTypeCL< float > () |

| template<> | |

| std::string | getDataTypeCL< double > () |

| bool | cudaPeerToPeerMemAccess (int gpuId1, int gpuId2) |

| static std::string | TransposeKernelNoBankConflicts_CL ("__kernel void transposeNoBankConflicts(__global TYPE* odata, __global TYPE* idata, int width, int height, __local TYPE* sdata)\n""{\n"" int xIndex = get_group_id(0) * TILEDIM + get_local_id(0);\n"" int yIndex = get_group_id(1) * TILEDIM + get_local_id(1);\n"" int index_in = xIndex + (yIndex)*width;\n"" if(xIndex<width && yIndex<height)\n"" sdata[get_local_id(1)*TILEDIM+get_local_id(0)] = idata[index_in];\n"" xIndex = get_group_id(1) * TILEDIM + get_local_id(0);\n"" yIndex = get_group_id(0) * TILEDIM + get_local_id(1);\n"" int index_out = xIndex + (yIndex)*height;\n"" \n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(xIndex<height && yIndex<width)\n"" odata[index_out] = sdata[get_local_id(0)*TILEDIM+get_local_id(1)];\n""}\n") |

| static std::string | GenerateKernel_CL ("__kernel void GenerateKernel_KERNELNAME(__global TYPE* output, size_t numElements, size_t indexOffset, CONST_TYPE const1)\n""{\n"" size_t i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" while(i < numElements)\n"" {\n"" output[i] = FUNCTIONNAME(i+indexOffset, const1);\n"" i += gridSize;\n"" }\n""}\n") |

| static std::string | GenerateKernel_CL_Matrix ("__kernel void GenerateKernel_Matrix_KERNELNAME(__global TYPE* output, size_t numElements, size_t xsize, size_t ysize, size_t yoffset, CONST_TYPE const1)\n""{\n"" size_t xindex = get_global_id(0);\n"" size_t yindex = get_global_id(1);\n"" size_t i = yindex*xsize + xindex; \n"" if(i < numElements && xindex<xsize && yindex <ysize)\n"" {\n"" output[i] = FUNCTIONNAME(xindex, yindex+yoffset, const1);\n"" }\n""}\n") |

| template<typename T , typename GenerateFunc > | |

| __global__ void | GenerateKernel_CU (GenerateFunc generateFunc, T *output, size_t numElements, size_t indexOffset) |

| template<typename T , typename GenerateFunc > | |

| __global__ void | GenerateKernel_CU_Matrix (GenerateFunc generateFunc, T *output, size_t numElements, size_t xsize, size_t ysize, size_t yoffset) |

| static const std::string | trimSpaces (const std::string &pString, const std::string &pWhitespace=" \t") |

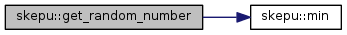

| template<typename T > | |

| T | get_random_number (T min, T max) |

| static std::string | read_file_into_string (const std::string &filename) |

| static void | toLowerCase (std::string &str) |

| static void | toUpperCase (std::string &str) |

| static bool | startsWith (const std::string &main, const std::string &prefix) |

| template<typename T > | |

| void | allocateHostMemory (T *&data, const size_t numElems) |

| template<typename T > | |

| void | deallocateHostMemory (T *data) |

| static std::string | UnaryMapKernel_CL ("__kernel void UnaryMapKernel_KERNELNAME(__global TYPE* input, __global TYPE* output, size_t numElements, CONST_TYPE const1)\n""{\n"" int i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" while(i < numElements)\n"" {\n"" output[i] = FUNCTIONNAME(input[i], const1);\n"" i += gridSize;\n"" }\n""}\n") |

| static std::string | BinaryMapKernel_CL ("__kernel void BinaryMapKernel_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* output, size_t n, CONST_TYPE const1)\n""{\n"" int i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" while(i < n)\n"" {\n"" output[i] = FUNCTIONNAME(input1[i], input2[i], const1);\n"" i += gridSize;\n"" }\n""}\n") |

| static std::string | TrinaryMapKernel_CL ("__kernel void TrinaryMapKernel_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* input3, __global TYPE* output, size_t n, CONST_TYPE const1)\n""{\n"" int i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" while(i < n)\n"" {\n"" output[i] = FUNCTIONNAME(input1[i], input2[i], input3[i], const1);\n"" i += gridSize;\n"" }\n""}\n") |

| template<typename T , typename UnaryFunc > | |

| __global__ void | MapKernelUnary_CU (UnaryFunc mapFunc, T *input, T *output, size_t n) |

| template<typename T , typename BinaryFunc > | |

| __global__ void | MapKernelBinary_CU (BinaryFunc mapFunc, T *input1, T *input2, T *output, size_t n) |

| template<typename T , typename TrinaryFunc > | |

| __global__ void | MapKernelTrinary_CU (TrinaryFunc mapFunc, T *input1, T *input2, T *input3, T *output, size_t n) |

| static std::string | MapArrayKernel_CL ("__kernel void MapArrayKernel_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* output, size_t n, CONST_TYPE const1)\n""{\n"" size_t i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" while(i < n)\n"" {\n"" output[i] = FUNCTIONNAME(&input1[0], input2[i], const1);\n"" i += gridSize;\n"" }\n""}\n") |

| static std::string | MapArrayKernel_CL_Matrix_Blockwise ("__kernel void MapArrayKernel_Matrix_Blockwise_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* output, size_t outSize, size_t p2BlockSize, CONST_TYPE const1)\n""{\n"" size_t i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" if(i < outSize)\n"" {\n"" output[i] = FUNCTIONNAME(&input1[0], &input2[i*p2BlockSize], const1);\n"" i += gridSize;\n"" }\n""}\n") |

| static std::string | MapArrayKernel_CL_Sparse_Matrix_Blockwise ("__kernel void MapArrayKernel_Sparse_Matrix_Blockwise_KERNELNAME(__global TYPE* input1, __global TYPE* in2_values, __global size_t *in2_row_offsets, __global size_t *in2_col_indices, __global TYPE* output, size_t outSize, size_t indexOffset, CONST_TYPE const1)\n""{\n"" size_t i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" if(i < outSize)\n"" {\n"" size_t rowId = in2_row_offsets[i] - indexOffset;\n"" size_t row2Id = in2_row_offsets[i+1] - indexOffset;\n"" output[i] = FUNCTIONNAME(&input1[0], &in2_values[rowId], (row2Id-rowId), &in2_col_indices[rowId], const1);\n"" i += gridSize;\n"" }\n""}\n") |

| static std::string | MapArrayKernel_CL_Matrix ("__kernel void MapArrayKernel_Matrix_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* output, size_t n, size_t xsize, size_t ysize, size_t yoffset, CONST_TYPE const1)\n""{\n"" size_t xindex = get_global_id(0);\n"" size_t yindex = get_global_id(1);\n"" size_t i = yindex*xsize + xindex; \n"" if(i < n && xindex<xsize && yindex <ysize)\n"" {\n"" output[i] = FUNCTIONNAME(&input1[0], input2[i], xindex, yindex+yoffset, const1);\n"" }\n""}\n") |

| template<typename T , typename ArrayFunc > | |

| __global__ void | MapArrayKernel_CU (ArrayFunc mapArrayFunc, T *input1, T *input2, T *output, size_t n) |

| template<typename T , typename ArrayFunc > | |

| __global__ void | MapArrayKernel_CU_Matrix_Blockwise (ArrayFunc mapArrayFunc, T *input1, T *input2, T *output, size_t outSize, size_t p2BlockSize) |

| template<typename T , typename ArrayFunc > | |

| __global__ void | MapArrayKernel_CU_Sparse_Matrix_Blockwise (ArrayFunc mapArrayFunc, T *input1, T *in2_values, size_t *in2_row_offsets, size_t *in2_col_indices, T *output, size_t outSize, size_t indexOffset) |

| template<typename T , typename ArrayFunc > | |

| __global__ void | MapArrayKernel_CU_Matrix (ArrayFunc mapArrayFunc, T *input1, T *input2, T *output, size_t n, size_t xsize, size_t ysize, size_t yoffset) |

| static std::string | MatrixConvolSharedFilter_CL ("__kernel void conv_opencl_shared_filter_KERNELNAME(__global TYPE* input, __global TYPE* output, __constant TYPE* filter, size_t in_rows, size_t in_cols, size_t out_rows, size_t out_cols, size_t filter_rows, size_t filter_cols, size_t in_pitch, size_t out_pitch, size_t sharedRows, size_t sharedCols, __local TYPE* sdata)\n""{\n"" size_t xx = ( (size_t)(get_global_id(0)/get_local_size(0))) * get_local_size(0);\n"" size_t yy = ( (size_t)(get_global_id(1)/get_local_size(1))) * get_local_size(1);\n"" size_t x = get_global_id(0);\n"" size_t y = get_global_id(1);\n"" if(x<(out_cols+filter_cols-1) && y<(out_rows+filter_rows-1))\n"" {\n"" size_t sharedIdx = get_local_id(1) * sharedCols + get_local_id(0);\n"" sdata[sharedIdx]= input[y*in_pitch + x];\n"" size_t shared_x= get_local_id(0)+get_local_size(0);\n"" size_t shared_y= get_local_id(1);\n"" while(shared_y<sharedRows)\n"" {\n"" while(shared_x<sharedCols)\n"" {\n"" sharedIdx = shared_y * sharedCols + shared_x; \n"" sdata[sharedIdx]= input[(yy+shared_y) * in_pitch + xx + shared_x];\n"" shared_x = shared_x + get_local_size(0);\n"" }\n"" shared_x = get_local_id(0);\n"" shared_y = shared_y + get_local_size(1);\n"" } \n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(x<out_cols && y<out_rows)\n"" {\n"" TYPE sum=0;\n"" for(size_t j=0;j<filter_rows;j++) \n"" {\n"" for(size_t i=0;i<filter_cols;i++) \n"" {\n"" sum += sdata[(get_local_id(1)+j) * sharedCols + (get_local_id(0)+i) ] * filter[j*filter_cols+i];\n"" }\n"" }\n"" output[y*out_pitch+x] = sum / (filter_rows * filter_cols);\n"" }\n""}") |

| static std::string | MatrixConvol2D_CL ("__kernel void conv_opencl_2D_KERNELNAME(__global TYPE* input, __global TYPE* output, size_t out_rows, size_t out_cols, size_t filter_rows, size_t filter_cols, size_t in_pitch, size_t out_pitch, size_t stride, size_t sharedRows, size_t sharedCols, __local TYPE* sdata)\n""{\n"" size_t xx = ( (size_t)(get_global_id(0)/get_local_size(0))) * get_local_size(0);\n"" size_t yy = ( (size_t)(get_global_id(1)/get_local_size(1))) * get_local_size(1);\n"" size_t x = get_global_id(0);\n"" size_t y = get_global_id(1);\n"" if(x<(out_cols+filter_cols-1) && y<(out_rows+filter_rows-1))\n"" {\n"" size_t sharedIdx = get_local_id(1) * sharedCols + get_local_id(0);\n"" sdata[sharedIdx]= input[y*in_pitch + x];\n"" size_t shared_x= get_local_id(0)+get_local_size(0);\n"" size_t shared_y= get_local_id(1);\n"" while(shared_y<sharedRows)\n"" {\n"" while(shared_x<sharedCols)\n"" {\n"" sharedIdx = shared_y * sharedCols + shared_x; \n"" sdata[sharedIdx]= input[(yy+shared_y) * in_pitch + xx + shared_x];\n"" shared_x = shared_x + get_local_size(0);\n"" }\n"" shared_x = get_local_id(0);\n"" shared_y = shared_y + get_local_size(1);\n"" } \n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(x<out_cols && y<out_rows)\n"" {\n"" output[y*out_pitch+x] = FUNCTIONNAME(&(sdata[(get_local_id(1)+(filter_rows/2)) * sharedCols + (get_local_id(0)+(filter_cols/2))]), stride);\n"" }\n""}") |

| static std::string | MatrixConvolShared_CL ("__kernel void conv_opencl_shared_KERNELNAME(__global TYPE* input, __global TYPE* output, size_t in_rows, size_t in_cols, size_t out_rows, size_t out_cols, size_t filter_rows, size_t filter_cols, size_t in_pitch, size_t out_pitch, size_t sharedRows, size_t sharedCols, __local TYPE* sdata)\n""{\n"" size_t xx = ( (size_t)(get_global_id(0)/get_local_size(0))) * get_local_size(0);\n"" size_t yy = ( (size_t)(get_global_id(1)/get_local_size(1))) * get_local_size(1);\n"" size_t x = get_global_id(0);\n"" size_t y = get_global_id(1);\n"" if(x<(out_cols+filter_cols-1) && y<(out_rows+filter_rows-1))\n"" {\n"" size_t sharedIdx = get_local_id(1) * sharedCols + get_local_id(0);\n"" sdata[sharedIdx]= input[y*in_pitch + x];\n"" size_t shared_x= get_local_id(0)+get_local_size(0);\n"" size_t shared_y= get_local_id(1);\n"" while(shared_y<sharedRows)\n"" {\n"" while(shared_x<sharedCols)\n"" {\n"" sharedIdx = shared_y * sharedCols + shared_x; \n"" sdata[sharedIdx]= input[(yy+shared_y) * in_pitch + xx + shared_x];\n"" shared_x = shared_x + get_local_size(0);\n"" }\n"" shared_x = get_local_id(0);\n"" shared_y = shared_y + get_local_size(1);\n"" } \n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(x<out_cols && y<out_rows)\n"" {\n"" TYPE sum=0;\n"" for(size_t j=0;j<filter_rows;j++) \n"" {\n"" for(size_t i=0;i<filter_cols;i++) \n"" {\n"" sum += sdata[(get_local_id(1)+j) * sharedCols + (get_local_id(0)+i) ];\n"" }\n"" }\n"" output[y*out_pitch+x] = sum / (filter_rows * filter_cols);\n"" }\n""}") |

| template<typename T > | |

| T | max (T a, T b) |

| template<typename T > | |

| T | min (T a, T b) |

| template<typename T > | |

| size_t | calculateTiling (size_t regCountPerThread, size_t filterSizeX, size_t filterSizeY, size_t inputSizeX, bool maximizeTiling=false) |

| template<typename T , typename OverlapFunc > | |

| __global__ void | conv_cuda_2D_kernel (OverlapFunc mapOverlapFunc, T *input, T *output, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_kernel (T *input, T *output, const size_t in_rows, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_kernel (T *input, T *output, const size_t numTiles, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_2_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_4_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_6_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_8_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_10_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_12_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_14_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| template<bool useFilter, typename T > | |

| __global__ void | conv_cuda_shared_tiling_16_kernel (T *input, T *output, const size_t in_cols, const size_t out_rows, const size_t out_cols, const size_t filter_rows, const size_t filter_cols, size_t in_pitch, size_t out_pitch, const size_t sharedRows, const size_t sharedCols) |

| static std::string | MapOverlapKernel_CL ("__kernel void MapOverlapKernel_KERNELNAME(__global TYPE* input, __global TYPE* output, __global TYPE* wrap, size_t n, size_t overlap, size_t out_offset, size_t out_numelements, int poly, TYPE pad, __local TYPE* sdata)\n""{\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0) * get_local_size(0) + get_local_id(0);\n"" if(poly == 0)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[i] : pad;\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (get_group_id(0) == 0) ? pad : input[i-overlap];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != get_num_groups(0)-1 && i+overlap < n) ? input[i+overlap] : pad;\n"" }\n"" }\n"" else if(poly == 1)\n"" {\n"" if(i < n)\n"" {\n"" sdata[overlap+tid] = input[i];\n"" }\n"" else if(i-n < overlap)\n"" {\n"" sdata[overlap+tid] = wrap[overlap+(i-n)];\n"" }\n"" else\n"" {\n"" sdata[overlap+tid] = pad;\n"" }\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (get_group_id(0) == 0) ? wrap[tid] : input[i-overlap];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != get_num_groups(0)-1 && i+overlap < n) ? input[i+overlap] : wrap[overlap+(i+overlap-n)];\n"" }\n"" }\n"" else if(poly == 2)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[i] : input[n-1];\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (get_group_id(0) == 0) ? input[0] : input[i-overlap];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != get_num_groups(0)-1 && i+overlap < n) ? input[i+overlap] : input[n-1];\n"" }\n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if( (i >= out_offset) && (i < out_offset+out_numelements) )\n"" {\n"" output[i-out_offset] = FUNCTIONNAME(&(sdata[tid+overlap]));\n"" }\n""}\n") |

| static std::string | MapOverlapKernel_CL_Matrix_Row ("__kernel void MapOverlapKernel_MatRowWise_KERNELNAME(__global TYPE* input, __global TYPE* output, __global TYPE* wrap, size_t n, size_t overlap, size_t out_offset, size_t out_numelements, int poly, TYPE pad, size_t blocksPerRow, size_t rowWidth, __local TYPE* sdata)\n""{\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0) * get_local_size(0) + get_local_id(0);\n"" size_t wrapIndex= 2 * overlap * (int)(get_group_id(0)/blocksPerRow);\n"" size_t tmp= (get_group_id(0) % blocksPerRow);\n"" size_t tmp2= (get_group_id(0) / blocksPerRow);\n"" if(poly == 0)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[i] : pad;\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? pad : input[i-overlap];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (i+overlap < n) && tmp!=(blocksPerRow-1)) ? input[i+overlap] : pad;\n"" }\n"" }\n"" else if(poly == 1)\n"" {\n"" if(i < n)\n"" {\n"" sdata[overlap+tid] = input[i];\n"" }\n"" else if(i-n < overlap)\n"" {\n"" sdata[overlap+tid] = wrap[(overlap+(i-n))+ wrapIndex];\n"" }\n"" else\n"" {\n"" sdata[overlap+tid] = pad;\n"" }\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? wrap[tid+wrapIndex] : input[i-overlap];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && i+overlap < n && tmp!=(blocksPerRow-1)) ? input[i+overlap] : wrap[overlap+wrapIndex+(tid+overlap-get_local_size(0))];\n"" }\n"" }\n"" else if(poly == 2)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[i] : input[n-1];\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? input[tmp2*rowWidth] : input[i-overlap];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (i+overlap < n) && (tmp!=(blocksPerRow-1))) ? input[i+overlap] : input[(tmp2+1)*rowWidth-1];\n"" }\n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if( (i >= out_offset) && (i < out_offset+out_numelements) )\n"" {\n"" output[i-out_offset] = FUNCTIONNAME(&(sdata[tid+overlap]));\n"" }\n""}\n") |

| static std::string | MapOverlapKernel_CL_Matrix_Col ("__kernel void MapOverlapKernel_MatColWise_KERNELNAME(__global TYPE* input, __global TYPE* output, __global TYPE* wrap, size_t n, size_t overlap, size_t out_offset, size_t out_numelements, int poly, TYPE pad, size_t blocksPerCol, size_t rowWidth, size_t colWidth, __local TYPE* sdata)\n""{\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0) * get_local_size(0) + get_local_id(0);\n"" size_t wrapIndex= 2 * overlap * (int)(get_group_id(0)/blocksPerCol);\n"" size_t tmp= (get_group_id(0) % blocksPerCol);\n"" size_t tmp2= (get_group_id(0) / blocksPerCol);\n"" size_t arrInd = (tid + tmp*get_local_size(0))*rowWidth + tmp2;\n"" if(poly == 0)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[arrInd] : pad;\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? pad : input[(arrInd-(overlap*rowWidth))];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (arrInd+(overlap*rowWidth)) < n && (tmp!=(blocksPerCol-1))) ? input[(arrInd+(overlap*rowWidth))] : pad;\n"" }\n"" }\n"" else if(poly == 1)\n"" {\n"" if(i < n)\n"" {\n"" sdata[overlap+tid] = input[arrInd];\n"" }\n"" else if(i-n < overlap)\n"" {\n"" sdata[overlap+tid] = wrap[(overlap+(i-n))+ wrapIndex];\n"" }\n"" else\n"" {\n"" sdata[overlap+tid] = pad;\n"" }\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? wrap[tid+wrapIndex] : input[(arrInd-(overlap*rowWidth))];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (arrInd+(overlap*rowWidth)) < n && (tmp!=(blocksPerCol-1))) ? input[(arrInd+(overlap*rowWidth))] : wrap[overlap+wrapIndex+(tid+overlap-get_local_size(0))];\n"" }\n"" }\n"" else if(poly == 2)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[arrInd] : input[n-1];\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? input[tmp2] : input[(arrInd-(overlap*rowWidth))];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (arrInd+(overlap*rowWidth)) < n && (tmp!=(blocksPerCol-1))) ? input[(arrInd+(overlap*rowWidth))] : input[tmp2+(colWidth-1)*rowWidth];\n"" }\n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if( (arrInd >= out_offset) && (arrInd < out_offset+out_numelements) )\n"" {\n"" output[arrInd-out_offset] = FUNCTIONNAME(&(sdata[tid+overlap]));\n"" }\n""}\n") |

| static std::string | MapOverlapKernel_CL_Matrix_ColMulti ("__kernel void MapOverlapKernel_MatColWiseMulti_KERNELNAME(__global TYPE* input, __global TYPE* output, __global TYPE* wrap, size_t n, size_t overlap, size_t in_offset, size_t out_numelements, int poly, int deviceType, TYPE pad, size_t blocksPerCol, size_t rowWidth, size_t colWidth, __local TYPE* sdata)\n""{\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0) * get_local_size(0) + get_local_id(0);\n"" size_t wrapIndex= 2 * overlap * (int)(get_group_id(0)/blocksPerCol);\n"" size_t tmp= (get_group_id(0) % blocksPerCol);\n"" size_t tmp2= (get_group_id(0) / blocksPerCol);\n"" size_t arrInd = (tid + tmp*get_local_size(0))*rowWidth + tmp2;\n"" if(poly == 0)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[arrInd+in_offset] : pad;\n"" if(deviceType == -1)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? pad : input[(arrInd-(overlap*rowWidth))];\n"" }\n"" \n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = input[(arrInd+in_offset+(overlap*rowWidth))];\n"" }\n"" }\n"" else if(deviceType == 0) \n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = input[arrInd];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = input[(arrInd+in_offset+(overlap*rowWidth))];\n"" }\n"" }\n"" else if(deviceType == 1)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = input[arrInd];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (arrInd+(overlap*rowWidth)) < n && (tmp!=(blocksPerCol-1))) ? input[(arrInd+in_offset+(overlap*rowWidth))] : pad;\n"" }\n"" }\n"" }\n"" else if(poly == 1)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[arrInd+in_offset] : ((i-n < overlap) ? wrap[(i-n)+ (overlap * tmp2)] : pad);\n"" if(deviceType == -1)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? wrap[tid+(overlap * tmp2)] : input[(arrInd-(overlap*rowWidth))];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = input[(arrInd+in_offset+(overlap*rowWidth))];\n"" }\n"" }\n"" else if(deviceType == 0)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = input[arrInd];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = input[(arrInd+in_offset+(overlap*rowWidth))];\n"" }\n"" }\n"" else if(deviceType == 1)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = input[arrInd];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (arrInd+(overlap*rowWidth)) < n && (tmp!=(blocksPerCol-1))) ? input[(arrInd+in_offset+(overlap*rowWidth))] : wrap[(overlap * tmp2)+(tid+overlap-get_local_size(0))];\n"" }\n"" }\n"" }\n"" else if(poly == 2)\n"" {\n"" sdata[overlap+tid] = (i < n) ? input[arrInd+in_offset] : input[n+in_offset-1];\n"" if(deviceType == -1)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = (tmp==0) ? input[tmp2] : input[(arrInd-(overlap*rowWidth))];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = input[(arrInd+in_offset+(overlap*rowWidth))];\n"" }\n"" }\n"" else if(deviceType == 0)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = input[arrInd];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = input[(arrInd+in_offset+(overlap*rowWidth))];\n"" }\n"" }\n"" else if(deviceType == 1)\n"" {\n"" if(tid < overlap)\n"" {\n"" sdata[tid] = input[arrInd];\n"" }\n"" if(tid >= (get_local_size(0)-overlap))\n"" {\n"" sdata[tid+2*overlap] = (get_group_id(0) != (get_num_groups(0)-1) && (arrInd+(overlap*rowWidth)) < n && (tmp!=(blocksPerCol-1))) ? input[(arrInd+in_offset+(overlap*rowWidth))] : input[tmp2+in_offset+(colWidth-1)*rowWidth];\n"" }\n"" }\n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if( arrInd < out_numelements )\n"" {\n"" output[arrInd] = FUNCTIONNAME(&(sdata[tid+overlap]));\n"" }\n""}\n") |

| template<typename T > | |

| __global__ void | transpose (T *odata, T *idata, size_t width, size_t height) |

| template<int poly, typename T , typename OverlapFunc > | |

| __global__ void | MapOverlapKernel_CU (OverlapFunc mapOverlapFunc, T *input, T *output, T *wrap, size_t n, size_t out_offset, size_t out_numelements, T pad) |

| template<int poly, typename T , typename OverlapFunc > | |

| __global__ void | MapOverlapKernel_CU_Matrix_Row (OverlapFunc mapOverlapFunc, T *input, T *output, T *wrap, size_t n, size_t out_offset, size_t out_numelements, T pad, size_t blocksPerRow, size_t rowWidth) |

| template<int poly, typename T , typename OverlapFunc > | |

| __global__ void | MapOverlapKernel_CU_Matrix_Col (OverlapFunc mapOverlapFunc, T *input, T *output, T *wrap, size_t n, size_t out_offset, size_t out_numelements, T pad, size_t blocksPerCol, size_t rowWidth, size_t colWidth) |

| template<int poly, int deviceType, typename T , typename OverlapFunc > | |

| __global__ void | MapOverlapKernel_CU_Matrix_ColMulti (OverlapFunc mapOverlapFunc, T *input, T *output, T *wrap, size_t n, size_t in_offset, size_t out_numelements, T pad, size_t blocksPerCol, size_t rowWidth, size_t colWidth) |

| static std::string | UnaryMapReduceKernel_CL ("__kernel void UnaryMapReduceKernel_KERNELNAME(__global TYPE* input, __global TYPE* output, size_t n, __local TYPE* sdata, CONST_TYPE const1)\n""{\n"" size_t blockSize = get_local_size(0);\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0)*blockSize + get_local_id(0);\n"" size_t gridSize = blockSize*get_num_groups(0);\n"" TYPE result = 0;\n"" if(i < n)\n"" {\n"" result = FUNCTIONNAME_MAP(input[i], const1);\n"" i += gridSize;\n"" }\n"" while(i < n)\n"" {\n"" TYPE tempMap;\n"" tempMap = FUNCTIONNAME_MAP(input[i], const1);\n"" result = FUNCTIONNAME_REDUCE(result, tempMap, (TYPE)0);\n"" i += gridSize;\n"" }\n"" sdata[tid] = result;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(blockSize >= 512) { if (tid < 256 && tid + 256 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 256], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 256) { if (tid < 128 && tid + 128 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 128], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 128) { if (tid < 64 && tid + 64 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 64], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 64) { if (tid < 32 && tid + 32 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 32], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 32) { if (tid < 16 && tid + 16 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 16], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 16) { if (tid < 8 && tid + 8 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 8], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 8) { if (tid < 4 && tid + 4 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 4], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 4) { if (tid < 2 && tid + 2 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 2], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 2) { if (tid < 1 && tid + 1 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 1], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(tid == 0)\n"" {\n"" output[get_group_id(0)] = sdata[tid];\n"" }\n""}\n") |

| static std::string | BinaryMapReduceKernel_CL ("__kernel void BinaryMapReduceKernel_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* output, size_t n, __local TYPE* sdata, CONST_TYPE const1)\n""{\n"" size_t blockSize = get_local_size(0);\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0)*blockSize + get_local_id(0);\n"" size_t gridSize = blockSize*get_num_groups(0);\n"" TYPE result = 0;\n"" if(i < n)\n"" {\n"" result = FUNCTIONNAME_MAP(input1[i], input2[i], const1);\n"" i += gridSize;\n"" }\n"" while(i < n)\n"" {\n"" TYPE tempMap;\n"" tempMap = FUNCTIONNAME_MAP(input1[i], input2[i], const1);\n"" result = FUNCTIONNAME_REDUCE(result, tempMap, (TYPE)0);\n"" i += gridSize;\n"" }\n"" sdata[tid] = result;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(blockSize >= 512) { if (tid < 256 && tid + 256 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 256], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 256) { if (tid < 128 && tid + 128 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 128], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 128) { if (tid < 64 && tid + 64 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 64], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 64) { if (tid < 32 && tid + 32 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 32], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 32) { if (tid < 16 && tid + 16 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 16], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 16) { if (tid < 8 && tid + 8 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 8], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 8) { if (tid < 4 && tid + 4 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 4], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 4) { if (tid < 2 && tid + 2 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 2], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 2) { if (tid < 1 && tid + 1 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 1], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(tid == 0)\n"" {\n"" output[get_group_id(0)] = sdata[tid];\n"" }\n""}\n") |

| static std::string | TrinaryMapReduceKernel_CL ("__kernel void TrinaryMapReduceKernel_KERNELNAME(__global TYPE* input1, __global TYPE* input2, __global TYPE* input3, __global TYPE* output, size_t n, __local TYPE* sdata, CONST_TYPE const1)\n""{\n"" size_t blockSize = get_local_size(0);\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0)*blockSize + get_local_id(0);\n"" size_t gridSize = blockSize*get_num_groups(0);\n"" TYPE result = 0;\n"" if(i < n)\n"" {\n"" result = FUNCTIONNAME_MAP(input1[i], input2[i], input3[i], const1);\n"" i += gridSize;\n"" }\n"" while(i < n)\n"" {\n"" TYPE tempMap;\n"" tempMap = FUNCTIONNAME_MAP(input1[i], input2[i], input3[i], const1);\n"" result = FUNCTIONNAME_REDUCE(result, tempMap, (TYPE)0);\n"" i += gridSize;\n"" }\n"" sdata[tid] = result;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(blockSize >= 512) { if (tid < 256 && tid + 256 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 256], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 256) { if (tid < 128 && tid + 128 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 128], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 128) { if (tid < 64 && tid + 64 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 64], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 64) { if (tid < 32 && tid + 32 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 32], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 32) { if (tid < 16 && tid + 16 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 16], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 16) { if (tid < 8 && tid + 8 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 8], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 8) { if (tid < 4 && tid + 4 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 4], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 4) { if (tid < 2 && tid + 2 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 2], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 2) { if (tid < 1 && tid + 1 < n) { sdata[tid] = FUNCTIONNAME_REDUCE(sdata[tid], sdata[tid + 1], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(tid == 0)\n"" {\n"" output[get_group_id(0)] = sdata[tid];\n"" }\n""}\n") |

| template<typename T , typename UnaryFunc , typename BinaryFunc > | |

| __global__ void | MapReduceKernel1_CU (UnaryFunc mapFunc, BinaryFunc reduceFunc, T *input, T *output, size_t n) |

| template<typename T , typename BinaryFunc1 , typename BinaryFunc2 > | |

| __global__ void | MapReduceKernel2_CU (BinaryFunc1 mapFunc, BinaryFunc2 reduceFunc, T *input1, T *input2, T *output, size_t n) |

| template<typename T , typename TrinaryFunc , typename BinaryFunc > | |

| __global__ void | MapReduceKernel3_CU (TrinaryFunc mapFunc, BinaryFunc reduceFunc, T *input1, T *input2, T *input3, T *output, size_t n) |

| template<typename T > | |

| __global__ void | transposeNaive (T *odata, T *idata, size_t width, size_t height) |

| A näive CUDA kernel to take Matrix transpose. | |

| template<typename T > | |

| __global__ void | transposeNoBankConflicts (T *odata, T *idata, size_t width, size_t height) |

| An optimized CUDA kernel to take Matrix transpose. | |

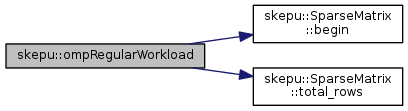

| template<typename ReduceFunc , typename T > | |

| void | ompRegularWorkload (ReduceFunc *reduceFunc, SparseMatrix< T > &input, T *result_array, const unsigned int &numThreads) |

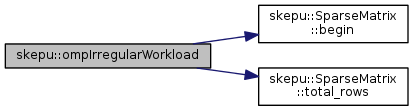

| template<typename ReduceFunc , typename T > | |

| void | ompIrregularWorkload (ReduceFunc *reduceFunc, SparseMatrix< T > &input, T *result_array) |

| static std::string | ReduceKernel_CL ("__kernel void ReduceKernel_KERNELNAME(__global TYPE* input, __global TYPE* output, size_t n, __local TYPE* sdata)\n""{\n"" size_t blockSize = get_local_size(0);\n"" size_t tid = get_local_id(0);\n"" size_t i = get_group_id(0)*blockSize + get_local_id(0);\n"" size_t gridSize = blockSize*get_num_groups(0);\n"" TYPE result = 0;\n"" if(i < n)\n"" {\n"" result = input[i];\n"" i += gridSize;\n"" }\n"" while(i < n)\n"" {\n"" result = FUNCTIONNAME(result, input[i], (TYPE)0);\n"" i += gridSize;\n"" }\n"" sdata[tid] = result;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(blockSize >= 512) { if (tid < 256 && tid + 256 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 256], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 256) { if (tid < 128 && tid + 128 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 128], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 128) { if (tid < 64 && tid + 64 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 64], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 64) { if (tid < 32 && tid + 32 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 32], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 32) { if (tid < 16 && tid + 16 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 16], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 16) { if (tid < 8 && tid + 8 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 8], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 8) { if (tid < 4 && tid + 4 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 4], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 4) { if (tid < 2 && tid + 2 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 2], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(blockSize >= 2) { if (tid < 1 && tid + 1 < n) { sdata[tid] = FUNCTIONNAME(sdata[tid], sdata[tid + 1], (TYPE)0); } barrier(CLK_LOCAL_MEM_FENCE); }\n"" if(tid == 0)\n"" {\n"" output[get_group_id(0)] = sdata[tid];\n"" }\n""}\n") |

| size_t | nextPow2 (size_t x) |

| A helper to return a value that is nearest value that is power of 2. More... | |

| void | getNumBlocksAndThreads (size_t n, size_t maxBlocks, size_t maxThreads, size_t &blocks, size_t &threads) |

| template<typename T , typename BinaryFunc > | |

| __global__ void | ReduceKernel_CU_oldAndIncorrect (BinaryFunc reduceFunc, T *input, T *output, size_t n) |

| template<typename T , typename BinaryFunc , size_t blockSize, bool nIsPow2> | |

| __global__ void | ReduceKernel_CU (BinaryFunc reduceFunc, T *input, T *output, size_t n) |

| bool | isPow2 (size_t x) |

| A small helper to determine whether the number is a power of 2. More... | |

| template<typename ReduceFunc , typename T > | |

| void | CallReduceKernel (ReduceFunc *reduceFunc, size_t size, size_t numThreads, size_t numBlocks, T *d_idata, T *d_odata, bool enableIsPow2=true) |

| template<typename ReduceFunc , typename T > | |

| void | ExecuteReduceOnADevice (ReduceFunc *reduceFunc, size_t n, size_t numThreads, size_t numBlocks, size_t maxThreads, size_t maxBlocks, T *d_idata, T *d_odata, unsigned int deviceID, bool enableIsPow2=true) |

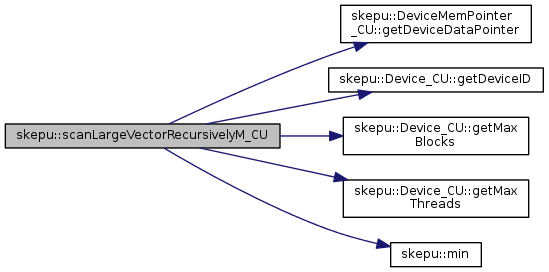

| template<typename BinaryFunc , typename T > | |

| static T | scanLargeVectorRecursivelyM_CU (DeviceMemPointer_CU< T > *input, DeviceMemPointer_CU< T > *output, std::vector< DeviceMemPointer_CU< T > * > &blockSums, size_t numElements, unsigned int level, ScanType type, T init, Device_CU *device, BinaryFunc scanFunc) |

| static std::string | ScanKernel_CL ("__kernel void ScanKernel_KERNELNAME(__global TYPE* input, __global TYPE* output, __global TYPE* blockSums, size_t n, size_t numElements, __local TYPE* sdata)\n""{\n"" size_t threadIdx = get_local_id(0);\n"" size_t blockDim = get_local_size(0);\n"" size_t blockIdx = get_group_id(0);\n"" size_t gridDim = get_num_groups(0);\n"" size_t thid = threadIdx;\n"" unsigned int pout = 0;\n"" unsigned int pin = 1;\n"" size_t mem = get_global_id(0);\n"" size_t blockNr = blockIdx;\n"" size_t gridSize = blockDim*gridDim;\n"" size_t numBlocks = numElements/(blockDim) + (numElements%(blockDim) == 0 ? 0:1);\n"" size_t offset;\n"" while(blockNr < numBlocks)\n"" {\n"" sdata[pout*n+thid] = (mem < numElements) ? input[mem] : 0;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" for(offset = 1; offset < n; offset *=2)\n"" {\n"" pout = 1-pout;\n"" pin = 1-pout;\n"" if(thid >= offset)\n"" sdata[pout*n+thid] = FUNCTIONNAME(sdata[pin*n+thid], sdata[pin*n+thid-offset], (TYPE)0);\n"" else\n"" sdata[pout*n+thid] = sdata[pin*n+thid];\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" }\n"" if(thid == blockDim - 1)\n"" blockSums[blockNr] = sdata[pout*n+blockDim-1];\n"" if(mem < numElements)\n"" output[mem] = sdata[pout*n+thid];\n"" mem += gridSize;\n"" blockNr += gridDim;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" }\n""}\n") |

| static std::string | ScanUpdate_CL ("__kernel void ScanUpdate_KERNELNAME(__global TYPE* data, __global TYPE* sums, int isInclusive, TYPE init, size_t n, __global TYPE* ret, __local TYPE* sdata)\n""{\n"" __local TYPE offset;\n"" __local TYPE inc_offset;\n"" size_t threadIdx = get_local_id(0);\n"" size_t blockDim = get_local_size(0);\n"" size_t blockIdx = get_group_id(0);\n"" size_t gridDim = get_num_groups(0);\n"" size_t thid = threadIdx;\n"" size_t blockNr = blockIdx;\n"" size_t gridSize = blockDim*gridDim;\n"" size_t mem = get_global_id(0);\n"" size_t numBlocks = n/(blockDim) + (n%(blockDim) == 0 ? 0:1);\n"" while(blockNr < numBlocks)\n"" {\n"" if(thid == 0)\n"" {\n"" if(isInclusive == 0)\n"" {\n"" offset = init;\n"" if(blockNr > 0)\n"" {\n"" offset = FUNCTIONNAME(offset, sums[blockNr-1], (TYPE)0);\n"" inc_offset = sums[blockNr-1];\n"" }\n"" }\n"" else\n"" {\n"" if(blockNr > 0)\n"" offset = sums[blockNr-1];\n"" }\n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(isInclusive == 1)\n"" {\n"" if(blockNr > 0)\n"" sdata[thid] = (mem < n) ? FUNCTIONNAME(offset, data[mem], (TYPE)0) : 0;\n"" else\n"" sdata[thid] = (mem < n) ? data[mem] : 0;\n"" if(mem == n-1)\n"" *ret = sdata[thid];\n"" }\n"" else\n"" {\n"" if(mem == n-1)\n"" *ret = FUNCTIONNAME(inc_offset, data[mem], (TYPE)0);\n"" if(thid == 0)\n"" sdata[thid] = offset;\n"" else\n"" sdata[thid] = (mem-1 < n) ? FUNCTIONNAME(offset, data[mem-1], (TYPE)0) : 0;\n"" }\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" if(mem < n)\n"" data[mem] = sdata[thid];\n"" mem += gridSize;\n"" blockNr += gridDim;\n"" barrier(CLK_LOCAL_MEM_FENCE);\n"" }\n""}\n") |

| static std::string | ScanAdd_CL ("__kernel void ScanAdd_KERNELNAME(__global TYPE* data, TYPE sum, size_t n)\n""{\n"" size_t i = get_global_id(0);\n"" size_t gridSize = get_local_size(0)*get_num_groups(0);\n"" while(i < n)\n"" {\n"" data[i] = FUNCTIONNAME(data[i], sum, (TYPE)0);\n"" i += gridSize;\n"" }\n""}\n") |

| template<typename T , typename BinaryFunc > | |

| __global__ void | ScanKernel_CU (BinaryFunc scanFunc, T *input, T *output, T *blockSums, size_t n, size_t numElements) |

| template<typename T , typename BinaryFunc > | |

| __global__ void | ScanUpdate_CU (BinaryFunc scanFunc, T *data, T *sums, int isInclusive, T init, size_t n, T *ret) |

| template<typename T , typename BinaryFunc > | |

| __global__ void | ScanAdd_CU (BinaryFunc scanFunc, T *data, T sum, size_t n) |

| template<typename T > | |

| void | ExecuteReduceOnADevice (size_t n, const size_t &numThreads, const size_t &numBlocks, _cl_mem *&in_p, _cl_mem *&out_p, cl_kernel &kernel, Device_CL *device) |

| void | replaceTextInString (std::string &text, std::string find, std::string replace) |

| void | printCLError (cl_int Err, std::string s=std::string()) |

| std::ostream & | operator<< (std::ostream &os, ExecPlanNew< 1 > &plan) |

| std::ostream & | operator<< (std::ostream &os, ExecPlanNew< 2 > &plan) |

| std::ostream & | operator<< (std::ostream &os, const std::vector< size_t > &vec) |

| template<typename StructType , typename StructType2 > | |

| void | cpu_tune_wrapper_map (void *arg) |

| Do training execution for a single performance context for Map skeleton and sequential CPU implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cpu_tune_wrapper_reduce (void *arg) |

| Do training execution for a single performance context for Reduce skeleton and sequential CPU implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cpu_tune_wrapper_mapoverlap (void *arg) |

| Do training execution for a single performance context for MapOverlap skeleton and sequential CPU implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cpu_tune_wrapper_maparray (void *arg) |

| Do training execution for a single performance context for MapArray skeleton and sequential CPU implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cpu_tune_wrapper_mapreduce (void *arg) |

| Do training execution for a single performance context for MapReduce skeleton and sequential CPU implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | omp_tune_wrapper_map (void *arg) |

| the following section contains function that can train OpenMP implementations. Only enabled when OpenMP is enabled in SkePU library More... | |

| template<typename StructType , typename StructType2 > | |

| void | omp_tune_wrapper_reduce (void *arg) |

| Do training execution for a single performance context for Reduce skeleton and parallel OpenMP implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | omp_tune_wrapper_mapoverlap (void *arg) |

| Do training execution for a single performance context for MapOverlap skeleton and parallel OpenMP implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | omp_tune_wrapper_maparray (void *arg) |

| Do training execution for a single performance context for MapArray skeleton and parallel OpenMP implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | omp_tune_wrapper_mapreduce (void *arg) |

| Do training execution for a single performance context for MapReduce skeleton and parallel OpenMP implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cuda_tune_wrapper_map (void *arg) |

| the following functions train for CUDA implementations for different skeletons... More... | |

| template<typename StructType , typename StructType2 > | |

| void | cuda_tune_wrapper_reduce (void *arg) |

| Do training execution for a single performance context for Reduce skeleton and CUDA implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cuda_tune_wrapper_mapoverlap (void *arg) |

| Do training execution for a single performance context for MapOverlap skeleton and CUDA implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cuda_tune_wrapper_maparray (void *arg) |

| Do training execution for a single performance context for MapArray skeleton and CUDA implementation. More... | |

| template<typename StructType , typename StructType2 > | |

| void | cuda_tune_wrapper_mapreduce (void *arg) |

| Do training execution for a single performance context for MapReduce skeleton and CUDA implementation. More... | |

| void | createDefaultConfiguration (BackEndParams &bp) |

| A helper function that creates the default configuration. More... | |

| bool | loadExecPlan (std::string id, ExecPlan &plan) |

| Loads an execution plan for a file into the structure passes as argument. More... | |

| bool | storeExecPlan (std::string id, const ExecPlan &plan) |

| Stores an execution plan for the structure passed as argument to a file. More... | |

| bool | loadExecPlanArray (std::string id, ExecPlan *planArray) |

| Loads execution plans for a file into the structure passes as argument. More... | |

| bool | storeExecPlanArray (std::string id, const ExecPlan *planArray, unsigned int nImpls) |

| Stores execution plans for the structure passed as argument to a file. More... | |

The main namespace for SkePU library.

All classes and functions in the SkePU library are in this namespace.

| enum skepu::AccessType |

Can be used to specify whether the access is row-wise or column-wise.

Used in some cases to mention type of access required in a certain operation.

| enum skepu::OverlapPolicy |

Enumeration of the different edge policies (what happens when a read outside the vector is performed) that the map overlap skeletons support.

| enum skepu::ReducePolicy |

Can be used to specify the direction of reduce for 2D containers.

Used in reduction operations for 2D containers.

| enum skepu::ScanType |

Enumeration of the two types of Scan that can be performed: Inclusive and Exclusive.

Can be used to specify the input format for a sparse matrix that is supplied in constructor.

Used to load sparse matrix from existing files.

| void skepu::allocateHostMemory | ( | T *& | data, |

| const size_t | numElems | ||

| ) |

Method to allocate host memory of a given size. Can do pinned memory allocation if enabled.

| bool skepu::cudaPeerToPeerMemAccess | ( | int | gpuId1, |

| int | gpuId2 | ||

| ) |

A helper function to check if peer-to-peer mem transfers can be done bwteen 2 gpus.. if yes, it enables it and return true, otherwise false....

| void skepu::deallocateHostMemory | ( | T * | data | ) |

Method to deallocate host memory.

| void skepu::ExecuteReduceOnADevice | ( | size_t | n, |

| const size_t & | numThreads, | ||

| const size_t & | numBlocks, | ||

| _cl_mem *& | in_p, | ||

| _cl_mem *& | out_p, | ||

| cl_kernel & | kernel, | ||

| Device_CL * | device | ||

| ) |

A helper function that is used to call the actual kernel for reduction. Used by other functions to call the actual kernel Internally, it just calls 2 kernels by setting their arguments. No synchronization is enforced.

| n | size of the input array to be reduced. |

| numThreads | Number of threads to be used for kernel execution. |

| numBlocks | Number of blocks to be used for kernel execution. |

| in_p | OpenCL memory pointer to input array. |

| out_p | OpenCL memory pointer to output array. |

| kernel | OpenCL kernel handle. |

| device | OpenCL device handle. |

References skepu::Device_CL::getQueue().

|

inline |

Method to get a random number between a given range.

References min().

Referenced by skepu::SparseMatrix< T >::SparseMatrix().

| std::string skepu::getDataTypeCL | ( | ) |

helper to return data type in a string format using template specialication technique.

| std::string skepu::getDataTypeCL< double > | ( | ) |

helper to return data type in a string format using template specialication technique.

| std::string skepu::getDataTypeCL< float > | ( | ) |

helper to return data type in a string format using template specialication technique.

| std::string skepu::getDataTypeCL< int > | ( | ) |

helper to return data type in a string format using template specialication technique.

| std::string skepu::getDataTypeCL< long > | ( | ) |

helper to return data type in a string format using template specialication technique.

| std::string skepu::getDataTypeCL< unsigned int > | ( | ) |

helper to return data type in a string format using template specialication technique.

| DevTimingStruct skepu::measureOrLoadCUDABandwidth | ( | int | gpuId, |

| bool | pinnedMemory = false |

||

| ) |

This function is called to measure or load bandwidth for a GPU

| gpuId | ID of the GPU for which to measure bandwidth |

| pinnedMemory | flag specifying whether to measure via pinned memory or not |

Referenced by skepu::Environment< T >::Environment().

| void skepu::ompIrregularWorkload | ( | ReduceFunc * | reduceFunc, |

| SparseMatrix< T > & | input, | ||

| T * | result_array | ||

| ) |

A function to do 1D reduction on OpenMP considering ir-regular work-load per row. Useful for un-structured sparse matrices.

References skepu::SparseMatrix< T >::begin(), and skepu::SparseMatrix< T >::total_rows().

| void skepu::ompRegularWorkload | ( | ReduceFunc * | reduceFunc, |

| SparseMatrix< T > & | input, | ||

| T * | result_array, | ||

| const unsigned int & | numThreads | ||

| ) |

A function to do 1D reduction on OpenMP considering regular work-load per row. Useful for 2D dense matrix as well as structured sparse matrices.

References skepu::SparseMatrix< T >::begin(), and skepu::SparseMatrix< T >::total_rows().

| std::ostream& skepu::operator<< | ( | std::ostream & | os, |

| const std::vector< size_t > & | vec | ||

| ) |

To display vector contents...

| void skepu::printCLError | ( | cl_int | Err, |

| std::string | s = std::string() |

||

| ) |

A helper function for OpenCL backends. It takes an OpenCL error code and prints the corresponding error message

| Err | OpenCL error |

| s | Optional text string that may give more information on the error source |

Referenced by skepu::MapOverlap2D< MapOverlap2DFunc >::CL(), skepu::Generate< GenerateFunc >::CL(), skepu::MapArray< MapArrayFunc >::CL(), and skepu::Reduce< ReduceFunc, ReduceFunc >::CL().

|

static |

Method to read text file data into a string.

Referenced by skepu::Environment< T >::createOpenCLProgramForMatrixTranspose().

| void skepu::replaceTextInString | ( | std::string & | text, |

| std::string | find, | ||

| std::string | replace | ||

| ) |

A helper function used by createOpenCLProgram(). It finds all instances of a string in another string and replaces it with a third string.

| text | A std::string which is searched. |

| find | The std::string which is searched for and replaced. |

| replace | The relpacement std::string. |

Referenced by skepu::Environment< T >::createOpenCLProgramForMatrixTranspose().

|

static |

Scans a Vector using the same recursive algorithm as NVIDIA SDK. First the vector is scanned producing partial results for each block. Then the function is called recursively to scan these partial results, which in turn can produce partial results and so on. This continues until only one block with partial results is left. Used by multi-GPU CUDA implementation.

| input | Pointer to the device memory where the input vector resides. |

| output | Pointer to the device memory where the output vector resides. |

| blockSums | A Vector of device memory pointers where the partial results for each level is stored. |

| numElements | The number of elements to scan. |

| level | The current recursion level. |

| type | The scan type, either INCLUSIVE or EXCLUSIVE. |

| init | The initialization value for exclusive scans. |

| device | Pointer to the device that will be used for the scan. |

| scanFunc | The user function used in the scan. |

References skepu::DeviceMemPointer_CU< T >::getDeviceDataPointer(), skepu::Device_CU::getDeviceID(), skepu::Device_CU::getMaxBlocks(), skepu::Device_CU::getMaxThreads(), and min().

Referenced by skepu::Scan< ScanFunc >::CU().

|

static |

Method to check whether a string starts with a given pattern.

|

static |

Method to convert to lower case.

|

static |

Method to convert to upper case.

|

static |

OpenCL Transpose kernel. Modified the transpose kernel provided by NVIDIA to make it work for any problem size rather than just perfect size such as 1024X1024.

Referenced by skepu::Environment< T >::createOpenCLProgramForMatrixTranspose().

|

static |

Method to remove leading and trailing spaces from a string.

Referenced by loadExecPlan(), and loadExecPlanArray().

1.8.5

1.8.5